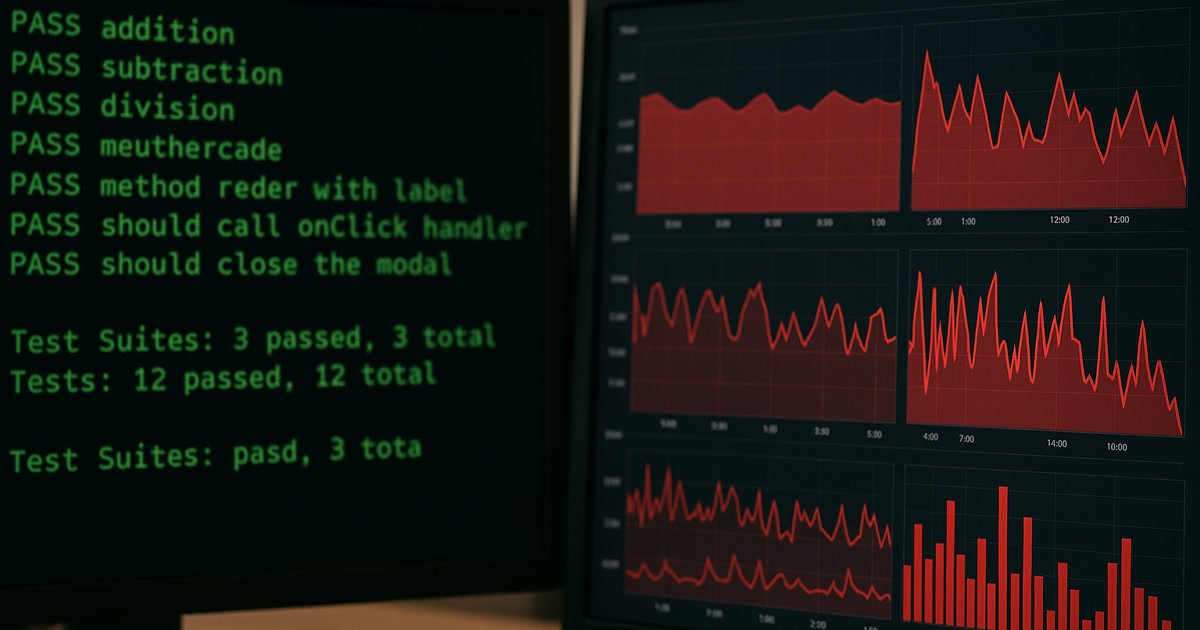

Last week, we reviewed a codebase where AI had written every single unit test. Coverage was at 98%. Every test passed. The CI/CD pipeline was green across the board.

The app broke within an hour of deployment.

Not a flaky test issue. Not an edge case. A fundamental misunderstanding of what the code was supposed to do — baked into every test the AI wrote.

The Setup

A development team was building a pricing engine for an e-commerce platform. The function calculated discounts based on cart value, customer tier, and active promotions. Standard stuff.

They used AI to generate the unit tests. The AI looked at the function, understood the logic, and wrote comprehensive tests covering every branch and condition.

Here's what the test suite validated:

- Cart value thresholds triggered the correct discount percentages

- Customer tier multipliers applied correctly

- Promotion codes stacked properly

- Edge cases like empty carts and expired promotions were handled

Every test passed. 98% coverage. Green pipeline. Ship it.

The Break

Within an hour of going live, customer support started getting tickets. Customers in the loyalty program were seeing higher prices than guest users.

The bug was in the tier multiplier logic. The function applied the loyalty discount before calculating the promotional discount, which meant the promotional percentage was calculated on the already-reduced price. Guest users got the promo discount on the full price — a better deal.

The math was technically correct. The business logic was wrong.
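It helps to see how the order of operations can produce this result. With two flat percentage discounts the order would not change the final price, because multiplication commutes, so the ordering has to interact with something that depends on the intermediate amount. The sketch below assumes one such mechanism: the promotional percentage is chosen by a cart-value threshold, and the threshold is checked against the already-reduced subtotal. The rates, the threshold, and the names `LOYALTY_RATE`, `promo_rate`, and `final_price` are all hypothetical stand-ins, not the team's actual code.

```python
from decimal import Decimal

LOYALTY_RATE = Decimal("0.10")  # hypothetical loyalty discount


def promo_rate(subtotal: Decimal) -> Decimal:
    """Hypothetical tiered promotion: 20% off at 100 or more, otherwise 5%."""
    return Decimal("0.20") if subtotal >= Decimal("100") else Decimal("0.05")


def final_price(cart_value: Decimal, is_loyalty: bool) -> Decimal:
    subtotal = cart_value
    if is_loyalty:
        # The loyalty discount is applied first...
        subtotal = subtotal * (1 - LOYALTY_RATE)
    # ...so the promotion tier is picked from the already-reduced subtotal.
    return subtotal * (1 - promo_rate(subtotal))


cart = Decimal("105")
guest_price = final_price(cart, is_loyalty=False)    # 105 * 0.80 = 84.00
loyalty_price = final_price(cart, is_loyalty=True)   # 94.50 * 0.95 = 89.775

print(f"guest:   {guest_price}")
print(f"loyalty: {loyalty_price}")
```

Under these assumptions, a 105 cart lands in the 20% tier for a guest but drops to 94.50 for a loyalty customer, which falls into the 5% tier. Every line of arithmetic is correct, and the loyalty customer still pays more.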

Why AI Missed It

Here's the thing: the AI tested exactly what the code did. And the code did exactly what it was written to do. The problem wasn't in the implementation — it was in the specification.

The AI had no way to know that loyalty customers should always get the better deal. That's not a technical requirement. It's a business rule that lives in someone's head, in a Slack conversation from six months ago, or in a product spec that was never updated.

AI tests what the code does. It doesn't test what the code should do. That distinction is everything.

The Pattern We Keep Seeing

This isn't an isolated case. We see this pattern repeatedly:

1. AI tests mirror the code's assumptions. If the code has a flawed assumption, the tests will validate that flaw. The AI doesn't question whether the assumption is correct — it just verifies the implementation matches.

2. AI doesn't understand business context. "Loyalty customers should never pay more than guests" is obvious to anyone who's worked in e-commerce for five minutes. It's invisible to an AI that only sees the function's code.

3. High coverage creates false confidence. 98% coverage sounds impressive. But coverage measures which lines of code were executed during testing. It says nothing about whether the right behavior was tested. You can have 100% coverage and 0% of your business rules validated.

4. The tests become documentation of bugs. When AI writes tests that pass against buggy code, those tests now protect the bug. Future developers see passing tests and assume the behavior is correct. The bug becomes an undocumented "feature."
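To make points 1, 3, and 4 concrete, here is a sketch of what code-derived tests tend to look like. It uses a hypothetical pricing function (the rates, the 100 threshold, and every name are assumptions for illustration, not the team's code). The expected values in the tests are simply whatever the function returns today.

```python
from decimal import Decimal

LOYALTY_RATE = Decimal("0.10")  # hypothetical loyalty discount


def promo_rate(subtotal: Decimal) -> Decimal:
    """Hypothetical tiered promotion: 20% off at 100 or more, otherwise 5%."""
    return Decimal("0.20") if subtotal >= Decimal("100") else Decimal("0.05")


def final_price(cart_value: Decimal, is_loyalty: bool) -> Decimal:
    subtotal = cart_value
    if is_loyalty:
        subtotal = subtotal * (1 - LOYALTY_RATE)
    return subtotal * (1 - promo_rate(subtotal))


# Tests written by reading the implementation: each expected value is
# copied from the function's current output, not from a business rule.
def test_guest_large_cart() -> None:
    assert final_price(Decimal("105"), False) == Decimal("84")


def test_guest_small_cart() -> None:
    assert final_price(Decimal("50"), False) == Decimal("47.5")


def test_loyalty_large_cart() -> None:
    # This assertion locks in the price that is HIGHER than the guest price.
    assert final_price(Decimal("105"), True) == Decimal("89.775")


for test in (test_guest_large_cart, test_guest_small_cart, test_loyalty_large_cart):
    test()  # all three pass against the current implementation
```

These three tests execute every line and both sides of every branch, so a coverage tool would report the function as fully covered. None of them compares a loyalty price with a guest price, and the third one will fail the day someone fixes the bug.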

What Actually Works

We're not saying don't use AI for testing. We use it constantly. But there's a specific way to do it that avoids these traps:

Write business-rule tests by hand first. Before AI touches anything, a human who understands the business writes tests that describe what should happen. "A loyalty customer's final price should always be less than or equal to a guest's price for the same cart." That's a test AI would never write on its own.

Use AI for coverage, not correctness. AI is excellent at finding untested branches, generating edge cases, and filling coverage gaps. Let it do that — after the human-written business tests are in place.

Review AI-generated tests like you review AI-generated code. Don't assume passing tests mean correct tests. Read them. Ask: "Does this test validate what our users expect, or just what the code happens to do?"

Test the boundaries, not just the branches. The pricing bug lived at the boundary between two features (loyalty discounts and promotional discounts). AI tested each feature independently. A human would have asked: "What happens when both apply?"
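One way to ask "what happens when both apply?" systematically is to enumerate feature combinations instead of testing each feature alone. The sketch below does that with `itertools.product` over a few cart values near an assumed threshold and a promotion on/off flag. The pricing function, its rates, and the `has_promo` parameter are hypothetical.

```python
from decimal import Decimal
from itertools import product

LOYALTY_RATE = Decimal("0.10")  # hypothetical loyalty discount


def promo_rate(subtotal: Decimal) -> Decimal:
    """Hypothetical tiered promotion: 20% off at 100 or more, otherwise 5%."""
    return Decimal("0.20") if subtotal >= Decimal("100") else Decimal("0.05")


def final_price(cart_value: Decimal, is_loyalty: bool, has_promo: bool) -> Decimal:
    subtotal = cart_value
    if is_loyalty:
        subtotal = subtotal * (1 - LOYALTY_RATE)
    if has_promo:
        subtotal = subtotal * (1 - promo_rate(subtotal))
    return subtotal


# Cart values chosen around the assumed promotion threshold of 100.
cart_values = [Decimal(n) for n in (50, 99, 100, 105, 111, 112, 200)]

# For every (cart, promo) combination, compare loyalty against guest.
failures = [
    (cart, has_promo)
    for cart, has_promo in product(cart_values, (False, True))
    if final_price(cart, True, has_promo) > final_price(cart, False, has_promo)
]

for cart, has_promo in failures:
    print(f"loyalty pays more: cart={cart}, promo={has_promo}")
```

In this sketch, every combination without a promotion satisfies the rule, and so does the promotion on its own. The failures appear only when both features are active and the cart sits just above the threshold, which is exactly the region that per-feature tests never visit.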

The Real Cost

The pricing bug surfaced within an hour. The fix took twenty minutes. But the real cost wasn't the fix. It was the investigation.

The team spent three hours tracing the issue because the test suite said everything was fine. They doubted their monitoring. They doubted their deployment. They doubted their infrastructure. The last thing they doubted was the tests, because the tests were "comprehensive."

That's the hidden cost of false confidence. When your safety net has holes you can't see, you waste time looking everywhere except where the problem actually is.

The Takeaway

AI-generated tests are a productivity tool, not a quality guarantee. They tell you whether your code does what your code does. They don't tell you whether your code does what your business needs.

The question isn't "do my tests pass?"

It's "do my tests test the right things?"

And that question still requires a human who understands what "right" means in your specific context.