What a $340K Code Review Mistake Looks Like (and Why Your CI/CD Pipeline Didn't Catch It)

A developer on a mid-size e-commerce team pushes a pull request on a Friday afternoon. The code looks clean. All 47 unit tests pass. The CI/CD pipeline runs green across the board: linting, type checking, and automated security scans all come back clear. The PR gets merged without a human architect reviewing the business logic.

By Monday morning, the discount engine has been applying a 15% promotion to every order, not just orders over $200 as the business rules specified. Over the weekend, 4,200 orders shipped at the wrong price. The total revenue loss: $340,000.

The code worked exactly as written. It just didn't work as intended. And no automated test caught the difference.

Why Automated Testing Has a Blind Spot

CI/CD pipelines are built to answer a specific question: "Does this code do what its tests and checks say it should do?" They're excellent at catching syntax errors, type mismatches, broken imports, and regressions against existing test cases.

What they can't answer is a different question entirely: "Does this code do what the business needs it to do?"

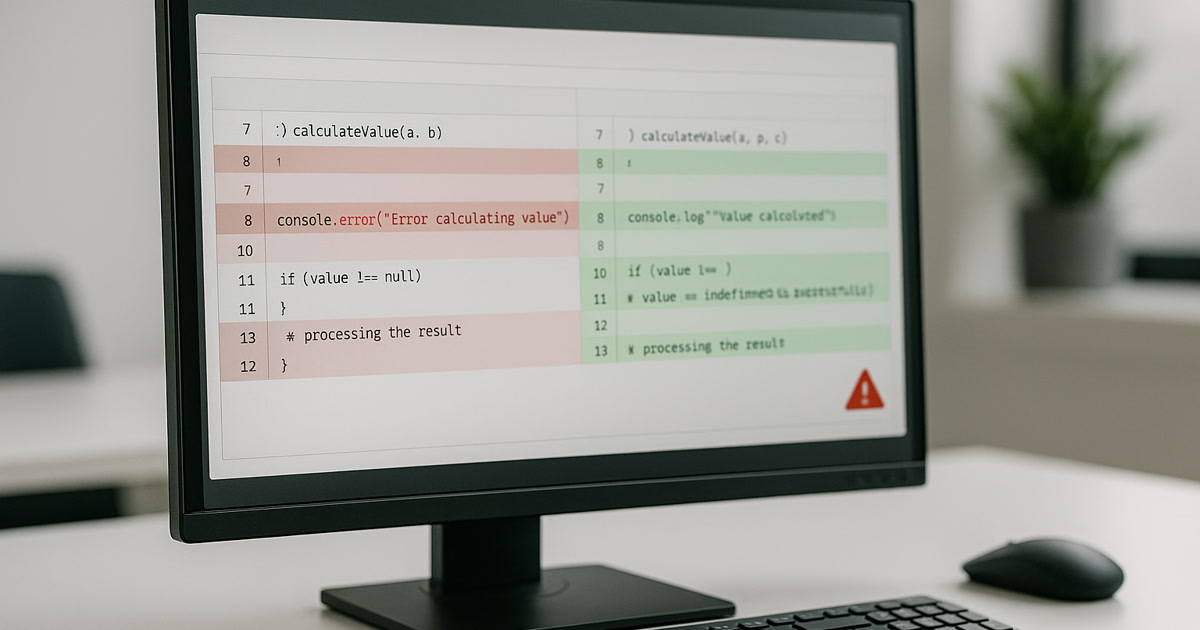

Business logic errors live in the gap between those two questions. A discount that applies to all orders instead of qualifying orders isn't a bug in the traditional sense. The code runs without errors. The function returns a value. The tests, if they were written to match the code rather than the business requirement, pass perfectly.
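To make that concrete, here is a minimal Python sketch of how such a bug and its passing test might look. The function name `apply_promotion`, the constants, and the test are all hypothetical; the article does not show the team's actual code.

```python
from decimal import Decimal

DISCOUNT_RATE = Decimal("0.15")
ORDER_MINIMUM = Decimal("200")  # the business rule; note it is never used below


def apply_promotion(order_total: Decimal) -> Decimal:
    """Return the order total after the promotion.

    Bug: the order minimum is never checked, so every order is discounted.
    """
    return order_total * (1 - DISCOUNT_RATE)


def test_apply_promotion_takes_15_percent_off() -> None:
    # Written by reading the implementation rather than the requirement:
    # it only asserts that 15% comes off, so it passes despite the bug.
    assert apply_promotion(Decimal("100")) == Decimal("85")


test_apply_promotion_takes_15_percent_off()  # passes, and the pipeline stays green
```

The test uses a $100 order, which should never have qualified, and still passes. Nothing in the pipeline has any way of knowing that.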

According to 2023 data cited by Outpost24 in its research on business logic vulnerabilities, 27% of API attacks that year were business logic attacks, a category that's growing year over year. HackerOne reports that 45% of its total bounty awards go toward business logic errors, reflecting how hard these flaws are to catch with automated tools and how much damage they cause when they slip through.

The $2.41 Trillion Context

The Consortium for Information & Software Quality (CISQ), in a report co-sponsored by Synopsys, estimated that poor software quality cost the U.S. economy $2.41 trillion in 2022. That number includes cybercrime losses, technical debt, and software failures. A significant portion comes from bugs that reach production because they weren't caught during development.

A widely cited figure attributed to the IBM Systems Sciences Institute holds that fixing a bug in production costs roughly 100 times more than fixing it during the design phase. By that multiplier, a $100 fix at design time becomes a $10,000 fix in production once you factor in incident response, customer impact, rollback procedures, and lost revenue.

This cost multiplier is why code review isn't just a development practice. It's a financial control.

What CI/CD Actually Tests (and What It Doesn't)

Here's a breakdown of what your typical CI/CD pipeline catches versus what slips through:

Your pipeline catches: Syntax errors, type errors, failed imports, regression against existing tests, known security vulnerability patterns, code style violations, dependency conflicts.

Your pipeline misses: Incorrect business rules, wrong conditional logic that still runs cleanly, missing edge cases that nobody wrote tests for, permission logic that works for most users but fails for specific roles, calculations that produce plausible but wrong numbers, data handling that works in test environments but fails with production-scale data.

The second list is where the expensive mistakes live. And AI-generated code makes this worse because AI tools optimize for code that runs and passes checks, not code that's correct from a business perspective.

AI Code Makes This Problem Worse

The 2024 DORA report found that 39% of surveyed professionals reported little to no trust in AI-generated code. That mistrust exists for good reason.

AI coding tools generate code that passes automated checks at high rates because they're trained on patterns that compile, run, and satisfy type systems. But they don't understand your business rules. They don't know that your discount engine should only apply to orders over $200. They don't know that your user permission model has a special case for legacy accounts. They don't know that your pricing API rounds differently than your invoicing system.
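The rounding case is easy to underestimate, so here is a small sketch of how two systems can disagree by a cent on the same amount. The rounding modes assigned to each system are assumptions for illustration (the article does not say which modes are involved), and the function names are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_EVEN, ROUND_HALF_UP

CENT = Decimal("0.01")


def pricing_round(amount: Decimal) -> Decimal:
    # Assumption: the pricing API rounds ties away from zero.
    return amount.quantize(CENT, rounding=ROUND_HALF_UP)


def invoicing_round(amount: Decimal) -> Decimal:
    # Assumption: the invoicing system uses banker's rounding (ties to even).
    return amount.quantize(CENT, rounding=ROUND_HALF_EVEN)


# A $12.50 item with 15% off lands exactly on a half cent: 10.6250
discounted = Decimal("12.50") * Decimal("0.85")

print(pricing_round(discounted))    # 10.63
print(invoicing_round(discounted))  # 10.62
```

Both functions are correct on their own terms and would pass type checks and unit tests written against them. The mismatch only shows up when someone who knows both systems compares the two.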

GitClear's analysis of 211 million lines of code found that code refactoring dropped from 24.1% of code changes in 2020 to just 9.5% in 2024. Meanwhile, copy-pasted code surged by 48%. That pattern, less refactoring and more copying, is a recipe for business logic errors. When developers paste AI-generated functions without restructuring them to fit the existing system's rules, the technical output works but the business outcome doesn't.

A CodeRabbit analysis reported that AI-assisted pull requests had 1.7 times more issues than human-authored ones. These aren't syntax errors. They're the kind of issues that require a human reviewer who understands the system's intent to catch.

The Knight Capital Warning

If you think a $340,000 discount error sounds bad, consider Knight Capital Group. On August 1, 2012, a deployment error left old testing code active on one of eight servers. In 45 minutes, that code executed over 4 million erroneous trades across 154 stocks. The loss: $440 million. The company never recovered and was acquired months later.

Knight Capital's disaster wasn't caused by AI. But it illustrates the same principle at work: code that functioned as written but didn't function as intended, and automated systems that couldn't tell the difference.

The parallel to today's AI-generated code is direct. When AI writes a function, it usually works in the narrow sense: it compiles, runs, and returns output. The question isn't whether it runs. The question is whether it does the right thing. And answering that question requires someone who understands the business context, not just the code.

What Architect Review Actually Catches

A senior architect reviewing code brings something automated tools can't: context. They know:

- How this feature connects to the billing system

- What edge cases have caused problems before

- Which business rules have exceptions that aren't documented in the code

- Where the integration points are between systems that were built at different times with different assumptions

Academic research suggests that formal code reviews catch roughly 60% of defects, while a Cisco study found that code reviews during development reduced bugs by about 36%. But the real value isn't in the percentage. It's in the type of defects caught.

Automated tests catch predictable failures. Human review catches the unpredictable ones, the business logic errors where the code does something reasonable but wrong.

For the $340K discount error, an architect review would have asked one question: "Where does this function check the order minimum?" That single question would have caught the bug before it shipped.

The Real Cost of Skipping Review

When teams skip architect review on AI-generated code, they're making an implicit bet: "The AI probably got the business logic right." That bet pays off most of the time. AI-generated code works correctly in many cases, especially for straightforward CRUD operations and standard patterns.

But "most of the time" isn't good enough when a single miss costs $340,000. Or when 72% of organizations report experiencing a production incident tied to AI-generated code.

The cost of architect review is measurable: an hour or two per significant PR. The cost of skipping it is also measurable, but usually after the damage is done.

Building a Review Process That Catches Business Logic Errors

Automated testing and human review aren't competing approaches. They handle different failure modes. Here's how to structure a process that covers both:

Keep your CI/CD pipeline. Automated tests still catch regressions, syntax issues, and known vulnerability patterns. Don't remove them. Add to them.

Flag AI-generated code for mandatory architect review. Any PR where AI wrote significant business logic should require sign-off from someone who understands the domain, not just the code.
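One way to enforce a rule like this is a merge gate that runs alongside your other checks. The sketch below is illustrative only: the `PullRequest` fields, the directory prefixes, and the idea that AI assistance is recorded as a flag (for example from a PR label) are all assumptions, not features of any particular platform.

```python
from dataclasses import dataclass

# Hypothetical directories that hold business logic in this sketch.
BUSINESS_LOGIC_PREFIXES = ("billing/", "pricing/", "permissions/")


@dataclass(frozen=True)
class PullRequest:
    changed_paths: tuple[str, ...]
    ai_assisted: bool         # assumption: set from a PR label or template checkbox
    architect_approved: bool  # assumption: set when a domain owner signs off


def touches_business_logic(pr: PullRequest) -> bool:
    # str.startswith accepts a tuple and matches any of the prefixes.
    return any(path.startswith(BUSINESS_LOGIC_PREFIXES) for path in pr.changed_paths)


def may_merge(pr: PullRequest) -> bool:
    """Block AI-assisted business logic changes until an architect approves."""
    if pr.ai_assisted and touches_business_logic(pr):
        return pr.architect_approved
    return True


pr = PullRequest(("pricing/discounts.py",), ai_assisted=True, architect_approved=False)
print(may_merge(pr))  # False
```

A gate like this does not review anything itself. It only guarantees that the human review cannot be skipped on a Friday afternoon.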

Write tests from requirements, not from code. The $340K bug would have been caught by a test that said "orders under $200 should not receive the 15% discount." That test comes from the business requirement, not from reading the AI-generated code and testing whether it does what it does.
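As a rough sketch, a requirement-driven test for this rule might look like the following. The `apply_promotion` function and its constants are hypothetical stand-ins for the real discount engine, shown here in their corrected form so the tests pass.

```python
from decimal import Decimal

DISCOUNT_RATE = Decimal("0.15")
ORDER_MINIMUM = Decimal("200")


def apply_promotion(order_total: Decimal) -> Decimal:
    """Apply the 15% promotion only to orders over the minimum."""
    if order_total > ORDER_MINIMUM:
        return order_total * (1 - DISCOUNT_RATE)
    return order_total


def test_order_under_minimum_gets_no_discount() -> None:
    # Straight from the requirement: orders under $200 pay full price.
    assert apply_promotion(Decimal("150")) == Decimal("150")


def test_order_over_minimum_gets_discount() -> None:
    # 15% off a $300 order is $255.
    assert apply_promotion(Decimal("300")) == Decimal("255")


test_order_under_minimum_gets_no_discount()
test_order_over_minimum_gets_discount()
```

Against a version of `apply_promotion` that discounts every order, the first test fails immediately, because it encodes what the business asked for rather than what the code happens to do.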

Add business rule validation to your test suite. Create a specific category of tests that verify business rules independently of implementation. These tests survive refactoring because they test outcomes, not code paths.
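One possible shape for such a suite is a table of cases that only describes outcomes, checked against whatever implementation is plugged in. Everything below is a hypothetical sketch: the case table, the `violations` helper, and both pricing functions are illustrative, and treating an order of exactly $200 as not qualifying is an assumption that reads "over $200" literally.

```python
from decimal import Decimal
from typing import Callable

# (order total, should the promotion apply?)
RULE_CASES = [
    (Decimal("150.00"), False),
    (Decimal("199.99"), False),
    (Decimal("200.00"), False),  # assumption: "over $200" excludes exactly $200
    (Decimal("200.01"), True),
    (Decimal("500.00"), True),
]


def violations(price_fn: Callable[[Decimal], Decimal]) -> list[Decimal]:
    """Return the order totals for which price_fn breaks the discount rule."""
    failed = []
    for total, should_discount in RULE_CASES:
        was_discounted = price_fn(total) < total
        if was_discounted != should_discount:
            failed.append(total)
    return failed


def discount_everything(total: Decimal) -> Decimal:
    # The buggy behavior from the opening scenario.
    return total * Decimal("0.85")


def discount_over_minimum(total: Decimal) -> Decimal:
    # The behavior the business rule describes.
    return total * Decimal("0.85") if total > Decimal("200") else total


print(violations(discount_everything))    # the three non-qualifying totals
print(violations(discount_over_minimum))  # []
```

Because the table never looks inside `price_fn`, the same cases keep working when the discount engine is rewritten, which is what lets these tests survive refactoring.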

Review AI-generated code with more scrutiny, not less. The speed at which AI produces code makes it tempting to speed up reviews too. Resist that. Faster code generation requires more careful review, not less.

Frequently Asked Questions

Can AI code review tools replace human architect review? Not for business logic. AI code review tools are good at catching style issues, potential security vulnerabilities, and common patterns that lead to bugs. But they can't verify whether your discount applies to the right orders or whether your permission model handles legacy accounts correctly. Those checks require someone who understands the business, not just the code.

How much time should architect review add to the development process? For most pull requests, an hour or two. For complex features involving payment, permissions, or data migration, budget half a day. Compare that to the cost of a production incident. A $340K mistake makes a half-day of review look like very cheap insurance.

What's the best way to test business logic specifically? Write tests directly from business requirements documents, not from the code itself. If the requirement says "discounts apply only to orders over $200," write a test that submits a $150 order and verifies no discount is applied. Keep these tests in a separate suite labeled "business rules" so they're maintained alongside requirement changes, not code changes.

Sources

- $2.41 trillion cost of poor software quality: CISQ/Synopsys, "The Cost of Poor Software Quality in the US: A 2022 Report" (December 2022).

- Production bugs cost 100x more than design-phase bugs: IBM Systems Sciences Institute, cited in multiple industry analyses including Perforce.

- 27% of API attacks were business logic attacks: Cited in business logic vulnerability research at Outpost24 (2023 data).

- 45% of HackerOne bounty awards go to business logic errors: HackerOne, "How a Business Logic Vulnerability Led to Unlimited Discount Redemption".

- 25% AI adoption increase linked to 7.2% delivery stability decrease: Google Cloud, 2024 DORA Report (2024).

- 39% reported little to no trust in AI-generated code: Google Cloud, 2024 DORA Report (2024).

- Code refactoring dropped from 24.1% to 9.5%: GitClear, "Coding on Copilot: Data Shows AI's Downward Pressure on Code Quality" (2024).

- AI-assisted PRs have 1.7x more issues: CodeRabbit, "State of AI vs. Human Code Generation Report".

- Knight Capital $440M loss in 45 minutes: Forbes, "Knight Capital Trading Disaster Carries $440 Million Price Tag" (August 2012).

- Formal code reviews catch ~60% of defects: Academic consensus cited in multiple software engineering analyses including Graphite.

- Cisco study: code reviews reduced bugs by 36%: Cisco Systems code review study, cited in GlowTouch.